Machine learning (ML) is a field of artificial intelligence (AI) that focuses on developing algorithms that let computers learn from data and make predictions or decisions based on it. Rather than being explicitly programmed to perform a task, these algorithms use statistical techniques to learn patterns from data.

Defining Machine Learning

At its core, machine learning is about teaching computers to learn from experience. Just as humans learn from experience, computers can learn from data. For instance, after training on thousands of labeled cat photos, a machine learning model can learn to identify a cat in a new photo it has never seen before.

| Term | Description |
|---|---|
| Algorithm | A set of rules or steps used to solve a problem. |
| Model | The output of a machine learning algorithm trained on data. A model is used for making predictions. |
| Training | The process of feeding data into a machine learning algorithm to train a model. |
| Prediction | The output of a trained model when given new unseen data. |
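To make the four terms in the table concrete, here is a minimal sketch in Python. The threshold rule, the function names `train` and `predict`, and the weight data are all made up for illustration; the sketch also assumes that class 1 has the larger average value.

```python
from statistics import mean


def train(examples):
    """Algorithm: place a threshold midway between the two class means.

    `examples` is a list of (value, label) pairs with labels 0 or 1.
    The number returned is the trained model.
    """
    mean_0 = mean(x for x, label in examples if label == 0)
    mean_1 = mean(x for x, label in examples if label == 1)
    return (mean_0 + mean_1) / 2


def predict(model, x):
    """Prediction: apply the trained model to a new, unseen value."""
    return 1 if x > model else 0


# Hypothetical training data: body weight in kg, label 0 = cat, 1 = dog.
training_data = [(3.5, 0), (4.0, 0), (4.5, 0), (20.0, 1), (25.0, 1), (30.0, 1)]

model = train(training_data)   # training produces the model (a threshold)
print(model)                   # 14.5
print(predict(model, 5.0))     # 0 (cat)
print(predict(model, 22.0))    # 1 (dog)
```

Real models have many more parameters than a single threshold, but the division of roles is the same: the algorithm is the procedure, training runs it on data, the model is what comes out, and a prediction is the model applied to new input.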

The Significance of Machine Learning

The power of machine learning lies in its applications. From voice assistants like Siri and Alexa to recommendation systems on Netflix and Amazon, machine learning algorithms are behind many modern technologies we use daily. They help in automating tasks, providing personalized experiences, and even in complex applications like predicting diseases or stock market trends.

The Evolution and History of Machine Learning

The concept of machines learning from data is not new. The term “machine learning” was coined in 1959 by Arthur Samuel, an IBM employee and pioneer in the field of computer gaming and artificial intelligence. However, the last two decades have seen explosive growth in the field, thanks to the availability of big data and advances in computational power.

The Core Concepts of Machine Learning

To truly grasp the essence of machine learning, it’s crucial to understand its foundational concepts. These concepts form the backbone of many algorithms and techniques in the field.

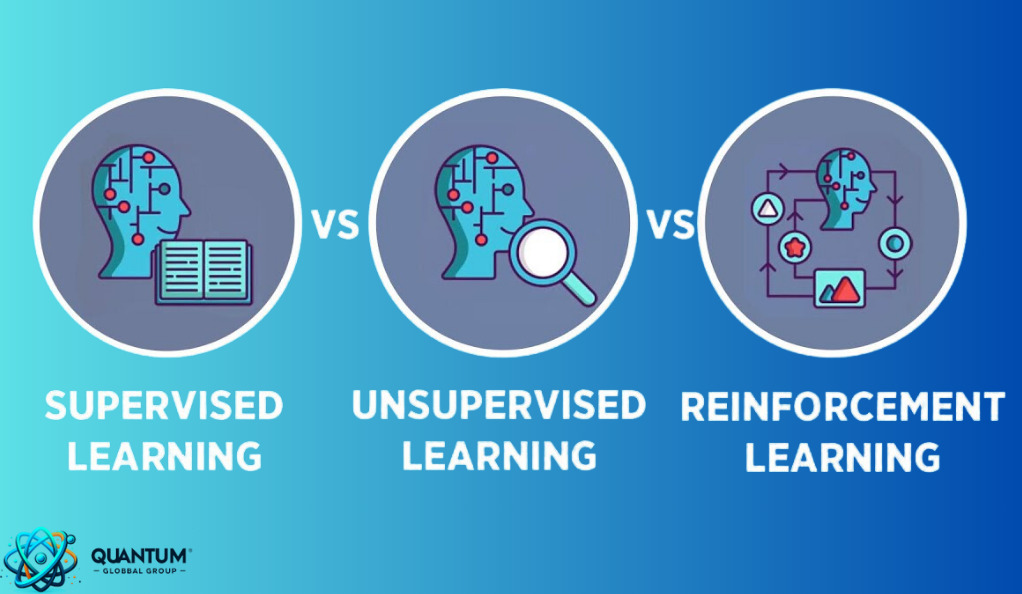

Supervised vs. Unsupervised vs. Reinforcement Learning

Machine learning can be broadly categorized into three main types based on the nature of the learning “signal” or “feedback” available to the learning system:

- Supervised Learning: This is the most common technique. In supervised learning, the algorithm is trained on a labeled dataset, meaning each example in the dataset is paired with the correct answer. For instance, if the task is to classify images of animals, the training data would consist of images of animals labeled with their respective species.

- Unsupervised Learning: Unlike supervised learning, in unsupervised learning, the algorithm is given data without explicit instructions on what to do with it. The system tries to learn the patterns and the structure from the data without any labeled responses to guide the learning process. Clustering and association are two types of problems solved by unsupervised learning.

- Reinforcement Learning: This type of learning is inspired by behavioral psychology and involves agents who take actions in an environment to maximize some notion of cumulative reward. The agent learns to achieve a goal in an uncertain, potentially complex environment.
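The difference between the first two categories can be sketched in a few lines of Python. The data and function names below are illustrative assumptions, and the two techniques shown (a nearest-neighbor classifier and a one-dimensional two-cluster k-means) are only simple stand-ins for each category. Reinforcement learning is left out because it needs an environment to interact with, which does not fit a short example.

```python
def nearest_neighbor_label(labeled_points, query):
    """Supervised: copy the label of the closest labeled example."""
    _, closest_label = min(labeled_points, key=lambda p: abs(p[0] - query))
    return closest_label


def two_means(values, iterations=10):
    """Unsupervised: group 1-D values into two clusters (k-means with k=2).

    Assumes `values` contains at least two distinct numbers.
    Returns the two cluster centers.
    """
    low, high = min(values), max(values)  # initial cluster centers
    for _ in range(iterations):
        # Assign every value to its nearest center, then recompute centers.
        low_group = [v for v in values if abs(v - low) <= abs(v - high)]
        high_group = [v for v in values if abs(v - low) > abs(v - high)]
        low = sum(low_group) / len(low_group)
        high = sum(high_group) / len(high_group)
    return low, high


# Supervised: every training value comes with the correct answer.
labeled = [(1.0, "small"), (2.0, "small"), (9.0, "large"), (10.0, "large")]
print(nearest_neighbor_label(labeled, 8.0))   # large

# Unsupervised: the same values without labels; structure is discovered.
print(two_means([1.0, 2.0, 9.0, 10.0]))       # (1.5, 9.5)
```

Note that the unsupervised function never sees the words "small" or "large". It only finds that the values fall into two groups.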

The Importance of Data in ML

Data is the lifeblood of machine learning. The quality and quantity of the data you feed into your algorithms strongly influence the quality of the results. Here’s why data is so crucial:

- Volume: Machine learning, especially deep learning, requires vast amounts of data to train on. The more data you have, the more accurate your model is likely to be.

- Variety: Different types of data (text, images, sound) can be used in machine learning. A diverse dataset helps the model generalize to the range of inputs it will encounter.

- Veracity: The data should be accurate. Misleading or incorrect data can lead to incorrect predictions.

- Velocity: In some applications, the speed at which data is generated is crucial. Real-time data processing can be essential for applications like autonomous driving.

The Mathematical Foundations of Machine Learning

Machine learning, at its heart, is deeply rooted in mathematics. From linear algebra to calculus, various mathematical concepts play a pivotal role in designing and understanding ML algorithms. Let’s delve into some of these foundational elements.

Role of Mathematical Optimization

Optimization is the process of finding the best solution from all feasible solutions. In machine learning, we often want to find the best model parameters that minimize a certain loss function. This is where mathematical optimization comes into play.

For instance, in linear regression, the goal is to find the line (or hyperplane in higher dimensions) that best fits the data. This is achieved by minimizing the difference (or error) between the predicted values and the actual values. This difference is represented by a loss function, and optimization algorithms, like gradient descent, are used to find the parameters that minimize this loss.
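The linear regression case described above can be sketched as follows. This is a minimal illustration, not a production implementation: the function name `fit_line`, the learning rate, the step count, and the toy data are all assumptions chosen so that the example converges.

```python
def fit_line(xs, ys, learning_rate=0.05, steps=2000):
    """Fit y = slope * x + intercept by gradient descent on the MSE loss."""
    slope, intercept = 0.0, 0.0
    n = len(xs)
    for _ in range(steps):
        # Prediction error for every training point.
        errors = [slope * x + intercept - y for x, y in zip(xs, ys)]
        # Partial derivatives of the mean squared error.
        grad_slope = 2 / n * sum(e * x for e, x in zip(errors, xs))
        grad_intercept = 2 / n * sum(errors)
        # Move each parameter a small step against its gradient.
        slope -= learning_rate * grad_slope
        intercept -= learning_rate * grad_intercept
    return slope, intercept


# Toy data generated from y = 2x + 1, so the best-fit line is known.
xs = [0.0, 1.0, 2.0, 3.0, 4.0]
ys = [1.0, 3.0, 5.0, 7.0, 9.0]

slope, intercept = fit_line(xs, ys)
print(round(slope, 4), round(intercept, 4))  # 2.0 1.0
```

The learning rate controls the step size. Too large a value makes the updates overshoot and diverge, while too small a value makes convergence slow, so in practice it is usually tuned per problem.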

Understanding Loss Functions and Model Evaluation

A loss function, also known as a cost function, measures how far off our model’s predictions are from the actual values. Different algorithms use different loss functions:

- Mean Squared Error (MSE): Commonly used in regression problems. It measures the average squared difference between the predicted and actual values.

- Cross-Entropy Loss: Used in classification problems, especially when predicting the probability of belonging to a particular class.

- Hinge Loss: Used for support vector machines, a type of classification algorithm.
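The three losses listed above can be written out in plain Python as a sketch. The function names and sample values are illustrative, the cross-entropy shown is the binary (two-class) form, and the hinge loss assumes labels encoded as -1 and +1.

```python
import math


def mean_squared_error(y_true, y_pred):
    """Average squared difference between actual and predicted values."""
    return sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true)


def binary_cross_entropy(y_true, p_pred, eps=1e-12):
    """Labels are 0 or 1; p_pred holds predicted probabilities of class 1."""
    total = 0.0
    for t, p in zip(y_true, p_pred):
        p = min(max(p, eps), 1 - eps)  # clip to avoid log(0)
        total -= t * math.log(p) + (1 - t) * math.log(1 - p)
    return total / len(y_true)


def hinge_loss(y_true, scores):
    """Labels are -1 or +1; scores are raw (unbounded) classifier outputs."""
    return sum(max(0.0, 1 - t * s) for t, s in zip(y_true, scores)) / len(y_true)


print(mean_squared_error([1.0, 2.0, 3.0], [1.0, 2.0, 5.0]))  # 4/3, about 1.333
print(binary_cross_entropy([1, 0], [0.5, 0.5]))              # ln 2, about 0.693
print(hinge_loss([1, -1], [2.0, 0.5]))                       # 0.75
```

In each case a lower value means the predictions are closer to the actual values, which is what the optimization step tries to achieve.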

Machine Learning in the Real World

Machine learning is not just a theoretical or academic pursuit; its real power is evident in its myriad applications that touch almost every aspect of our daily lives. From the apps on our phones to critical medical diagnostics, ML algorithms are hard at work behind the scenes.

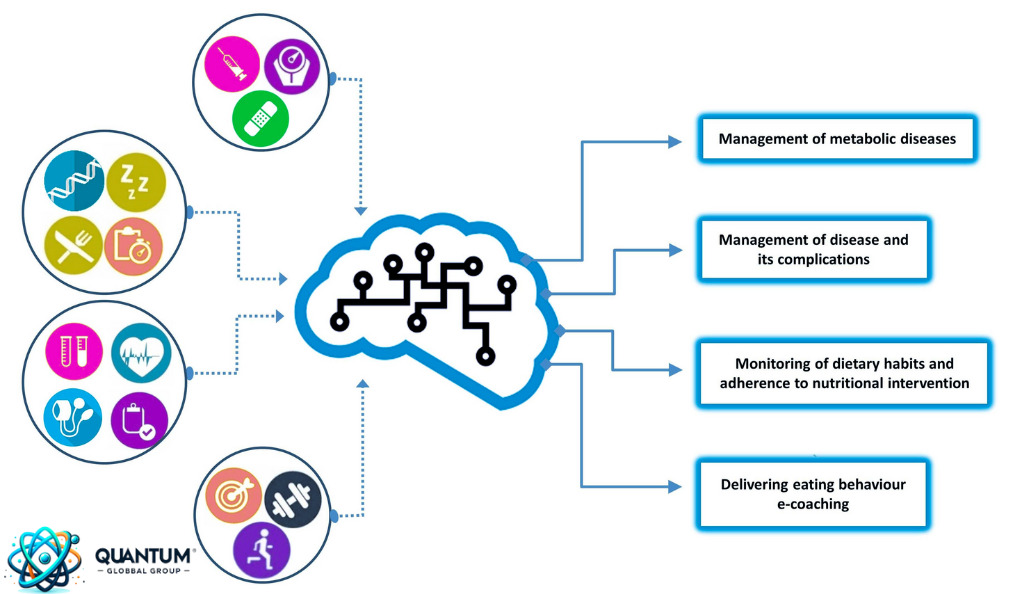

Applications in Various Industries

- Healthcare: Machine learning is revolutionizing healthcare with predictive analytics for patient diagnosis, drug discovery, and personalized treatment plans. For instance, ML algorithms can analyze medical images to detect tumors, anomalies, or diseases at early stages.

- Finance: In the financial sector, ML is used for algorithmic trading, personal finance, fraud detection, and robo-advisors for portfolio management.

- E-commerce: Recommendation systems powered by ML enhance user experience by suggesting products based on user behavior, preferences, and purchase history.

- Agriculture: ML algorithms help in predicting crop yields, detecting potential diseases or pests, and automating tasks with agricultural robots.

- Transportation: Autonomous vehicles use machine learning to navigate, avoid obstacles, and make driving decisions. ML also aids in optimizing delivery routes, predicting maintenance needs, and improving public transportation systems.

Real-world Examples of ML Algorithms in Action

- DeepMind’s AlphaGo: This AI system, developed by Google’s DeepMind and powered by advanced ML algorithms, made headlines in 2016 when it defeated Lee Sedol, one of the world’s top Go players, showcasing the potential of deep reinforcement learning.

- Chatbots and Virtual Assistants: Siri, Alexa, and Google Assistant use natural language processing (NLP), a field of AI that relies heavily on ML, to understand and respond to user queries.

- Netflix’s Recommendation Engine: The streaming giant uses ML to analyze user preferences and viewing habits, providing tailored show and movie recommendations.

- Face Recognition Systems: Platforms like Facebook have used ML-powered face recognition to suggest tags for individuals in photos, while security systems use it to grant access based on facial features.

The Relationship Between AI and Machine Learning

The terms “Artificial Intelligence” (AI) and “Machine Learning” (ML) are often used interchangeably, but they are not the same. Understanding the relationship and distinction between the two is crucial for anyone delving into the realm of modern technology.

Defining Artificial Intelligence

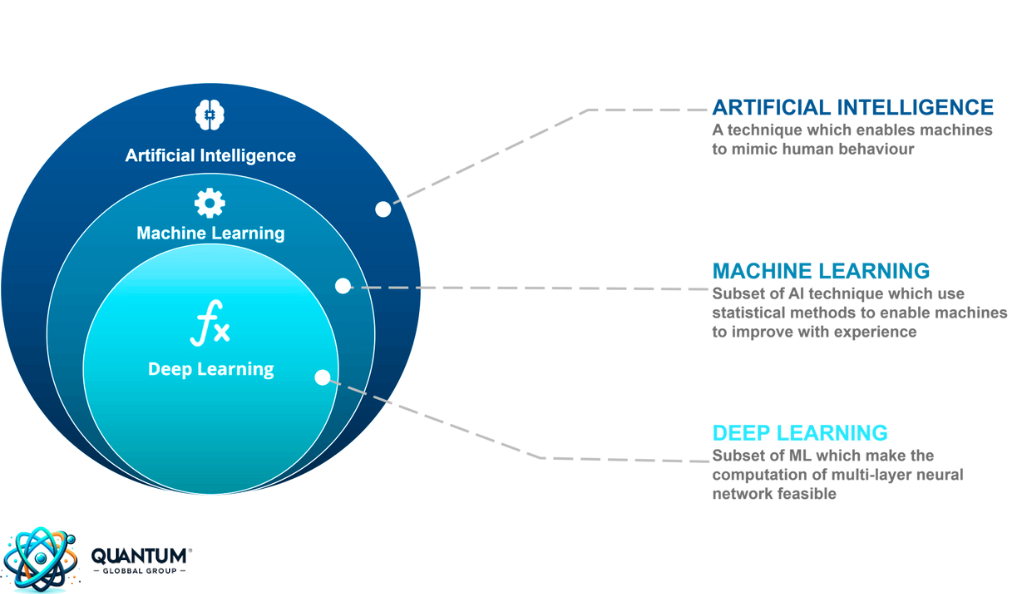

Artificial Intelligence is a broader concept that refers to machines or software being able to perform tasks that typically require human intelligence. These tasks can range from understanding natural language and recognizing patterns to making decisions and solving complex problems. The ultimate goal of AI is to create systems that can perform tasks that, when done by humans, are considered to require intelligence.

Machine Learning: A Subset of AI

Machine Learning is a subset of AI. It’s a method of achieving AI through the use of algorithms that allow machines to improve on a task with experience. In other words, ML is the engine that drives the modern AI revolution. While all machine learning is AI, not all AI is machine learning. There are other methods and techniques to achieve AI, but ML has proven to be the most effective in recent years, especially with the rise of big data and advanced algorithms.

Deep Learning: Taking ML a Step Further

Deep Learning is a further subset of ML, built around neural networks with three or more layers. These networks are loosely inspired by the human brain and can “learn” from vast amounts of data. While a neural network with a single layer can make approximate predictions, additional hidden layers can help refine those predictions.
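A small sketch can show why hidden layers matter. The XOR function (output 1 when exactly one input is 1) cannot be computed by a single threshold unit, but a network with one hidden layer can compute it. The weights below are hand-picked for illustration rather than learned from data, and the function names are hypothetical.

```python
def step(z):
    """Threshold activation: fire (1) when the weighted input is positive."""
    return 1 if z > 0 else 0


def xor_network(x1, x2):
    """Tiny network with one hidden layer and hand-picked weights."""
    # Hidden layer: one unit behaves like OR, the other like AND.
    h_or = step(x1 + x2 - 0.5)
    h_and = step(x1 + x2 - 1.5)
    # Output layer: fires when OR is on but AND is off.
    return step(h_or - h_and - 0.5)


for a in (0, 1):
    for b in (0, 1):
        print(a, b, xor_network(a, b))
# 0 0 0
# 0 1 1
# 1 0 1
# 1 1 0
```

In real deep learning the weights are found by training on data, typically with gradient-based optimization, and the networks have far more units and layers. The principle is the same: each layer builds on the features computed by the layer before it.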

Differences and Similarities Between AI and ML

- Purpose: AI aims to create machines that can mimic or surpass human intelligence in specific tasks. ML, on the other hand, focuses on allowing machines to learn from data without being explicitly programmed.

- Scope: AI has a broader scope encompassing anything that allows machines to mimic human intelligence, including robotics, natural language processing, and problem-solving. ML is specifically about learning from data.

- Learning: While ML systems learn from data and improve with experience, not all AI systems have the capability to learn. Some AI systems are built for a specific task and don’t change or improve over time.

Conclusion

The journey through the world of machine learning offers a glimpse into the future of technology and its profound impact on our lives. From its mathematical foundations to its real-world applications, machine learning stands as a testament to human ingenuity and the endless possibilities of innovation. While the relationship between AI and ML is intricate, understanding their distinctions and interconnectedness is crucial as we stand on the brink of a technological revolution.

However, with great power comes great responsibility. As we harness the potential of machine learning, it’s imperative to address the ethical implications, challenges, and limitations that arise. Ensuring responsible and equitable use of ML will pave the way for a future where technology enhances human capabilities and fosters a better, more inclusive world.